MONTH 2023

ASSEMBLY LINES

Embodied AI Ushers in a New Robotics Era

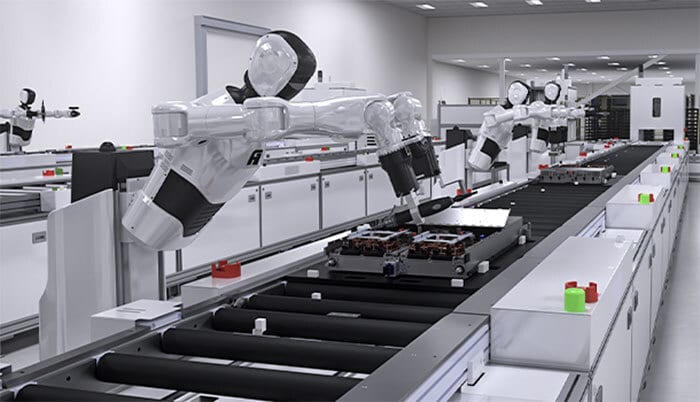

NEW YORK—The integration of artificial intelligence into robotics is poised for significant expansion in the near future, according to a new report by ABI Research. “The Landscape of AI in Industrial and Collaborative Robotics” reveals that AI-augmented machines have already achieved the technological maturity required for widespread adoption.

This pivotal moment means that factory robots will soon be tackling complex, dynamic and dexterous tasks previously unattainable with traditional automation.

“For years, the robotics industry has grappled with the ‘sim-to-real’ gap and the over-promising of nascent AI,” says George Chowdhury, senior analyst at ABI Research. “Our deep dive into the technology’s readiness confirms that robust algorithms, particularly in Dynamic Policy Adjustment (DPA) and emerging Robotics Foundation Models, are now capable of delivering on the promise of true adaptive automation.

Industrial robots will soon be tackling complex, dynamic and dexterous tasks previously unattainable with traditional automation. Photo courtesy Nvidia

“This isn’t about incremental improvements; it’s a paradigm shift where robots can finally adapt to the unpredictable real world, moving beyond rigid programming to genuinely intelligent, adaptive execution,” claims Chowdhury.

While traditional robotics continues to serve existing static manufacturing processes, Chowdhury believes that significant growth potential lies in under-automated markets and workflows requiring sophisticated, heterogeneous dexterous manipulation. This includes specialized sectors where the ability to handle variability is paramount. Potential applications range from niche, high-value manufacturing, such as semiconductor production, to life sciences, logistics and warehousing.

According to Chowdhury, key advancements reshaping robotics include reinforcement learning, robot foundation models, large language model (LLM) interfaces for human-robot interaction (HRI), new simultaneous localization and mapping (SLAM) and world models, agentic AI, DPA platforms, and machine vision algorithms. The ABI Research report assesses the maturity of these technologies and identifies leading innovators driving progress in each area.

Companies advancing adaptive automation and DPA include Apera, Cambrian AI, InBolt, Nvidia, Robovision, Summer Robotics, T-Robotics and V-SIM.

In machine vision hardware and vertical AI-robotics, Chowdhury says notable players include Augmentus, Basler, Cognex, Intel RealSense, Mech-Mind, Nikon, OnRobot, SICK, Solomon3D, Universal Robots and Zebra.

“Additionally, new robotics foundation models from Covariant, Dexterity, Field AI, Google DeepMind, Intrinsic, Meta, Physical Intelligence and Skild AI signal a shift toward more capable and adaptable autonomous systems,” claims Chowdhury.

“The critical challenge now is translating this technical readiness into widespread commercial adoption,” explains Chowdhury. “Vendors must prioritize usability, transparency and clear ROI metrics to overcome economic uncertainty and market skepticism.”

GM Joint Venture Debuts New Auto Manufacturing System

LIUZHOU, China—SAIC-GM-Wuling (SGMW) has ramped up a new automotive production process that replaces the traditional assembly line with a fully flexible, AI-driven, advanced manufacturing model that provides enhanced efficiency, precision and quality. The Intelligent Island Manufacturing System (I²MS) is a radical departure from the century-old linear line paradigm.

SGMW claims that the new flexible island design in its factory delivers a 30 percent increase in manufacturing efficiency and a 31 percent reduction in unit costs, along with 100 percent full-lifecycle product data traceability. I²MS also shortens the product development cycle by 43 percent.

The Intelligent Island Manufacturing System is 30 percent more efficient than traditional automotive assembly lines. Illustration courtesy SAIC-GM-Wuling

“With I²MS, we’re building a system that gives us more room to adapt, faster responses to market shifts, better ways to uphold quality and the flexibility needed in China’s fast-changing intelligent new energy vehicle era,” says Vincent Wong, executive vice president of SGMW. “It reflects our ongoing efforts to explore what the future of intelligent manufacturing could look like.

“Combining decades of manufacturing expertise with digital transformation strategy, [our] unique I²MS represents a fundamental reinvention of the traditional automotive linear assembly model,” claims Wong.

The system restructures conventional lines into multiple independent assembly islands across manufacturing processes. Automated, intelligent guided logistic vehicles coordinate real-time component delivery across islands, enabling multiple processes to run in parallel.

“The body plant also hosts a cluster of manufacturing innovations and massively adopts leading technologies, including 3D vision, laser radar measurement, and AI-powered automated inspections to improve accuracy and achieve consistent quality across every vehicle,” adds Wong.

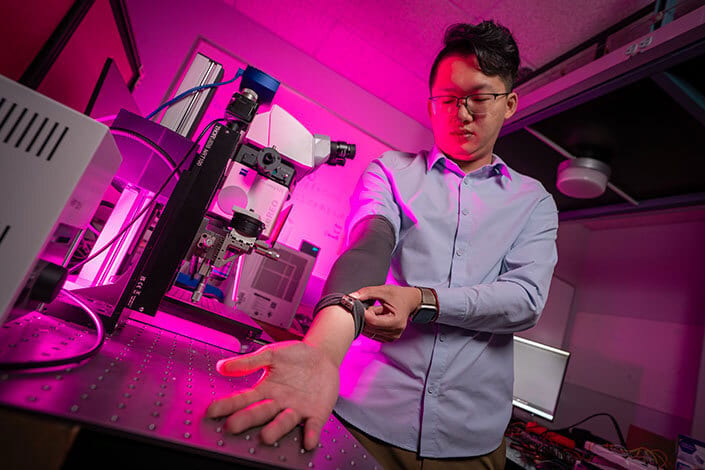

Wearable Device Can Control Machines While in Motion

SAN DIEGO—Engineers at the University of California San Diego have developed a next-generation wearable device that enables people to control robots and other machines using everyday gestures. It combines stretchable electronics with artificial intelligence to overcome a long-standing challenge in wearable technology: reliable recognition of gesture signals in real-world environments.

Wearable technologies with gesture sensors work fine when a user is sitting still, but their signals are often distorted by motion noise once the user starts moving.

The new device is a soft electronic patch that is glued onto a cloth armband. It integrates motion and muscle sensors, a Bluetooth microcontroller and a stretchable battery into a compact, multilayered system.

The device was trained on a composite dataset of real gestures and conditions, from running and shaking to the movement of ocean waves. Signals from the arm are captured and processed by a customized deep-learning framework that strips away interference, interprets the gesture, and transmits a command to control a machine, such as a robotic arm, in real time.

A next-generation wearable device enables people to control robots and other machines using everyday gestures. Photo courtesy University of California San Diego

“This advancement brings us closer to intuitive and robust human-machine interfaces that can be deployed in daily life,” says Xiangjun Chen, Ph.D., a postdoctoral researcher working on the project. “By integrating AI to clean noisy sensor data in real time, the technology enables everyday gestures to reliably control machines even in highly dynamic environments.”

People used the wearable device to control a robotic arm while running, while exposed to high-frequency vibrations, and under a combination of disturbances.

“This work establishes a new method for noise tolerance in wearable sensors,” claims Chen. “It paves the way for next-generation wearable systems that are not only stretchable and wireless, but also capable of learning from complex environments and individual users.”

According to Chen, industrial workers and first responders could potentially use the technology for hands-free control of tools and robots in high-motion or hazardous environments. “It could even enable divers and remote operators to command underwater robots despite turbulent conditions,” he points out. “In consumer devices, the system could make gesture-based controls more reliable in everyday settings.”

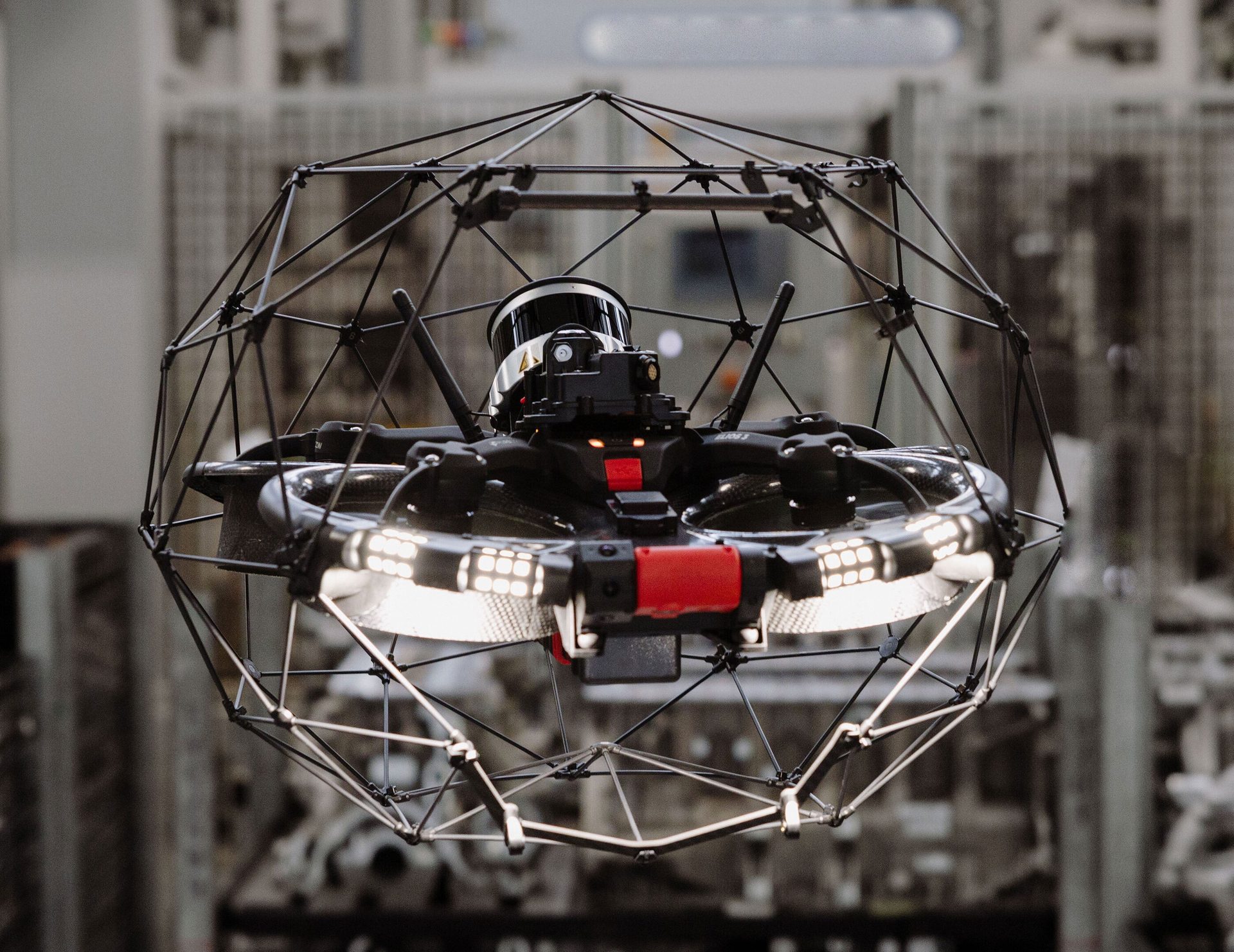

JLR Deploys Drones to Improve Safety and Efficiency

GAYDON, England—Engineers at JLR, the manufacturer of Jaguar and Land Rover vehicles, are using drones at the company’s Electric Propulsion Manufacturing Centre (EPMC) in Wolverhampton. The initiative has reduced machinery and site inspection time by 95 percent.

The Elios 3 drone by Flyability reaches high and confined spaces, allowing maintenance teams to inspect equipment safely from the factory floor, eliminating the need for elevated platforms and reducing risk.

Operated via tablet, the drone delivers a live 3D map to identify and troubleshoot issues. This helps JLR avoid costly maintenance downtime, while also freeing up employees’ time to focus on additional business-critical tasks.

JLR is using indoor drones in its facilities for site inspection and maintenance applications. Photo courtesy JLR

“As we transform our facilities, we’re rethinking every part of our factories, including how we maintain and operate them,” says Nigel Blenkinsop, executive director of industrial operations at JLR. “Advanced drone technology is helping us improve employee safety, reduce maintenance downtime and operate more efficiently.

“Just as importantly, [it’s] helping upskill our people in the latest digital technologies, ensuring our teams are part of our factories of the future,” explains Blenkinsop.

The drone is equipped with lidar sensors that enable engineers to create detailed 3D maps of the surrounding environment. In addition, a thermal camera pinpoints overheating components or insulation failures. Detecting such inefficiencies early helps optimize energy use and supports JLR’s efforts to reduce its overall operational emissions.

Following successful trials at EPMC, the drone will be deployed at JLR’s logistics operations center in Solihull. It will be equipped with bar code scanners to automate inventory checks, replace manual processes and enable faster, more accurate stock updates.

“This will help improve safety, reduce errors and support smarter decisions on space, stock levels and supply flow,” says Blenkinsop.